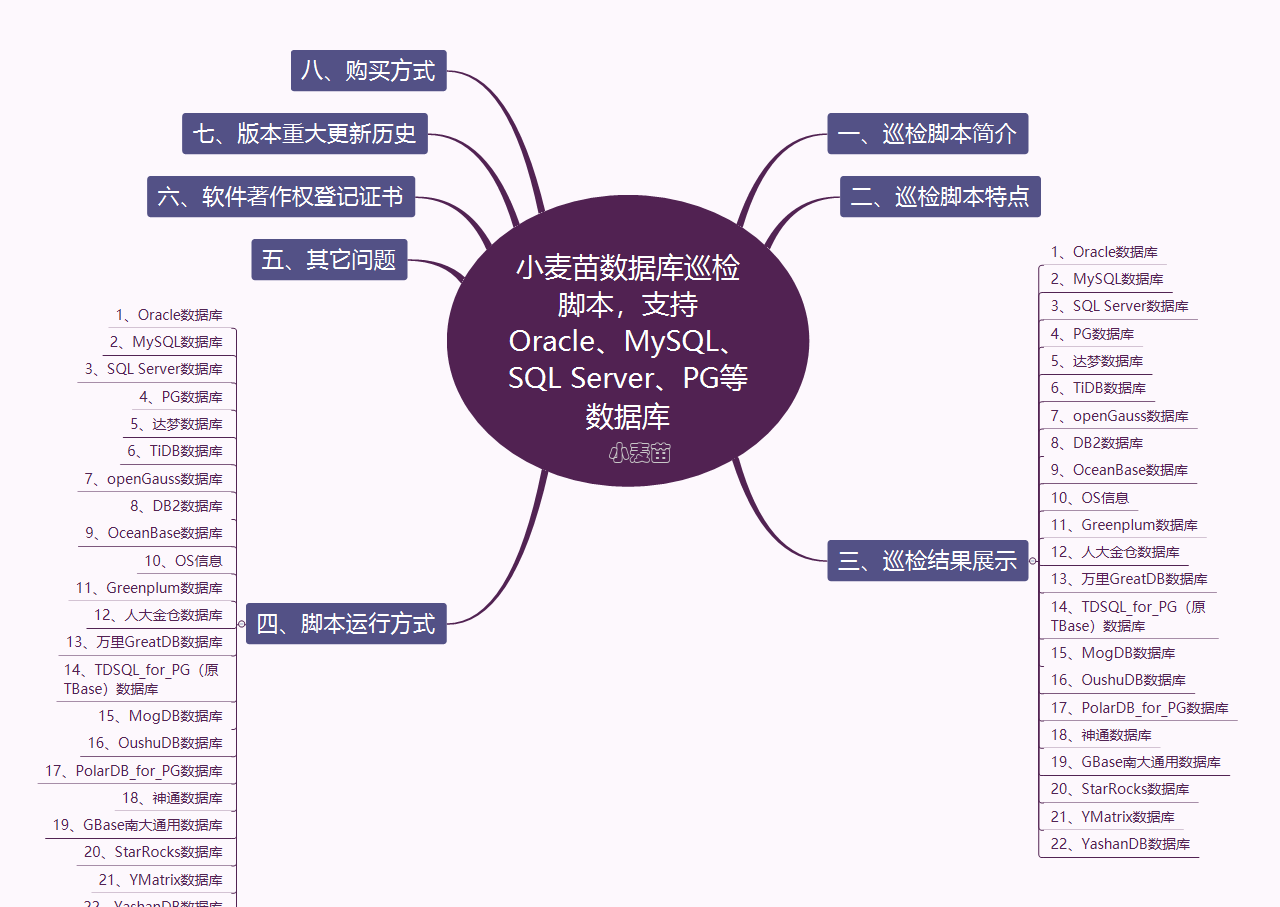

合 GreenPlum 7.1.0新特性介绍

- 简介

- GreenPlum 7.1.0环境准备

- 新特性实验

- VMware Greenplum 7.1.0引入了tablefunc模块,提供了各种返回表的函数示例,包括行转列等功能

- 新增pg_buffercache和gp_buffercache视图

- 孤儿文件相关

- 分区表相关

- pg_filedump程序

- 故障恢复gprecoverseg

- EXPLAIN ANALYZE增强

- gppkg增强

- 系统视图gp_stat_progress_dtx_recovery

- log_directory配置日志位置

- 新增optimizer_enable_right_outer_join服务器配置参数

- VACUUM命令现在包含了SKIP_DATABASE_STATS和ONLY_DATABASE_STATS子句

- pg_config命令的输出现在包括了Greenplum版本信息。

- 全部新特性原文

- Release 7.1.0

- New and Changed Features

- 参考

简介

GreenPlum 7.0.0于2023-09-28发布,大约半年后,GreenPlum 7.1.0于2024-02-09发布。

在本文中,麦老师就其中一些比较实用的新特性做一些简单说明。

GreenPlum 7.1.0环境准备

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 | docker rm -f gpdb7 docker run -itd --name gpdb7 -h gpdb7 \ -p 5437:5432 -p 28087:28080 \ -v /sys/fs/cgroup:/sys/fs/cgroup \ --privileged=true lhrbest/greenplum:7.1.0 \ /usr/sbin/init docker exec -it gpdb7 bash su - gpadmin gpstart -a gpcc start gpcc status gpstate |

此docker包括1个master,1个standby master,2个segment,2个mirror实例;还包括gpcc 7.0.0

新特性实验

VMware Greenplum 7.1.0引入了tablefunc模块,提供了各种返回表的函数示例,包括行转列等功能

tablefunc模块包括多个返回表(也就是多行)的函数。这些函数都很有用,并且也可以作为如何编写返回多行的 C 函数的例子。

示例可以参考:https://www.postgresql.org/docs/12/tablefunc.html

http://postgres.cn/docs/12/tablefunc.html

| 函数 | 返回 | 描述 |

|---|---|---|

normal_rand(int numvals, float8 mean, float8 stddev) | setof float8 | 产生一个正态分布的随机值集合 |

crosstab(text sql) | setof record | 产生一个包含行名称外加N个值列的“数据透视表”,其中N由调用查询中指定的行类型决定 |

crosstab*N*(text sql) | setof table_crosstab_*N* | 产生一个包含行名称外加N个值列的“数据透视表”。crosstab2、crosstab3和crosstab4是被预定义的,但你可以按照下文所述创建额外的crosstab*N*函数 |

crosstab(text source_sql, text category_sql) | setof record | 产生一个“数据透视表”,其值列由第二个查询指定 |

crosstab(text sql, int N) | setof record | crosstab(text)的废弃版本。参数N现在被忽略,因为值列的数量总是由调用查询所决定 |

connectby(text relname, text keyid_fld, text parent_keyid_fld [, text orderby_fld ], text start_with, int max_depth [, text branch_delim ]) | setof record | 产生一个层次树结构的表达 |

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140 141 142 143 144 145 146 147 148 149 150 151 152 153 154 155 156 | db1=# CREATE EXTENSION tablefunc; CREATE EXTENSION db1=# SELECT * FROM normal_rand(1000, 5, 3); normal_rand ---------------------- 2.3210274434791187 1.231076402857033 -0.8117263529261152 -1.2934824713330597 8.292221876591267 3.804515144372151 1.9176029752768766 7.146218652634886 3.551605912228543 5.575493201208664 6.666709079414525 2.5228426084040176 6.407538689302069 5.8016036456658995 4.277014091604118 5.780894470091546 5.750904724932745 5.753381245096707 2.4427467584795792 6.81576512005292 8.192744936276732 6.614708709243898 8.77794265411034 5.791113475048419 5.70369412214234 4.327753473864319 7.570550167961118 3.5597661002608407 8.046435727461073 9.658108512543121 6.470092796527577 7.666408022086054 db1=# db1=# db1=# db1=# CREATE TABLE ct(id SERIAL, rowid TEXT, attribute TEXT, value TEXT); NOTICE: Table doesn't have 'DISTRIBUTED BY' clause -- Using column named 'id' as the Greenplum Database data distribution key for this table. HINT: The 'DISTRIBUTED BY' clause determines the distribution of data. Make sure column(s) chosen are the optimal data distribution key to minimize skew. INSERT INTO ct(rowid, attribute, value) VALUES('test1','att1','val1'); CREATE TABLE db1=# INSERT INTO ct(rowid, attribute, value) VALUES('test1','att1','val1'); INSERT 0 1 db1=# INSERT INTO ct(rowid, attribute, value) VALUES('test1','att2','val2'); INSERT 0 1 db1=# INSERT INTO ct(rowid, attribute, value) VALUES('test1','att3','val3'); INSERT 0 1 db1=# INSERT INTO ct(rowid, attribute, value) VALUES('test1','att4','val4'); INSERT 0 1 db1=# INSERT INTO ct(rowid, attribute, value) VALUES('test2','att1','val5'); INSERT 0 1 db1=# INSERT INTO ct(rowid, attribute, value) VALUES('test2','att2','val6'); INSERT 0 1 db1=# INSERT INTO ct(rowid, attribute, value) VALUES('test2','att3','val7'); INSERT 0 1 db1=# INSERT INTO ct(rowid, attribute, value) VALUES('test2','att4','val8'); INSERT 0 1 db1=# db1=# SELECT * db1-# FROM crosstab( db1(# 'select rowid, attribute, value db1'# from ct db1'# where attribute = ''att2'' or attribute = ''att3'' db1'# order by 1,2') db1-# AS ct(row_name text, category_1 text, category_2 text, category_3 text); row_name | category_1 | category_2 | category_3 ----------+------------+------------+------------ test1 | val2 | val3 | test2 | val6 | val7 | (2 rows) db1=# create table sales(year int, month int, qty int); NOTICE: Table doesn't have 'DISTRIBUTED BY' clause -- Using column named 'year' as the Greenplum Database data distribution key for this table. HINT: The 'DISTRIBUTED BY' clause determines the distribution of data. Make sure column(s) chosen are the optimal data distribution key to minimize skew. CREATE TABLE db1=# insert into sales values(2007, 1, 1000); INSERT 0 1 db1=# insert into sales values(2007, 2, 1500); INSERT 0 1 db1=# insert into sales values(2007, 7, 500); INSERT 0 1 db1=# insert into sales values(2007, 11, 1500); INSERT 0 1 db1=# insert into sales values(2007, 12, 2000); INSERT 0 1 db1=# insert into sales values(2008, 1, 1000); INSERT 0 1 db1=# db1=# select * from crosstab( db1(# 'select year, month, qty from sales order by 1', db1(# 'select m from generate_series(1,12) m' db1(# ) as ( db1(# year int, db1(# "Jan" int, db1(# "Feb" int, db1(# "Mar" int, db1(# "Apr" int, db1(# "May" int, db1(# "Jun" int, db1(# "Jul" int, db1(# "Aug" int, db1(# "Sep" int, db1(# "Oct" int, db1(# "Nov" int, db1(# "Dec" int db1(# ); year | Jan | Feb | Mar | Apr | May | Jun | Jul | Aug | Sep | Oct | Nov | Dec ------+------+------+-----+-----+-----+-----+-----+-----+-----+-----+------+------ 2007 | 1000 | 1500 | | | | | 500 | | | | 1500 | 2000 2008 | 1000 | | | | | | | | | | | (2 rows) db1=# CREATE TABLE cth(rowid text, rowdt timestamp, attribute text, val text); NOTICE: Table doesn't have 'DISTRIBUTED BY' clause -- Using column named 'rowid' as the Greenplum Database data distribution key for this table. HINT: The 'DISTRIBUTED BY' clause determines the distribution of data. Make sure column(s) chosen are the optimal data distribution key to minimize skew. CREATE TABLE db1=# INSERT INTO cth VALUES('test1','01 March 2003','temperature','42'); INSERT 0 1 db1=# INSERT INTO cth VALUES('test1','01 March 2003','test_result','PASS'); INSERT 0 1 db1=# INSERT INTO cth VALUES('test1','01 March 2003','volts','2.6987'); INSERT 0 1 db1=# INSERT INTO cth VALUES('test2','02 March 2003','temperature','53'); INSERT 0 1 db1=# INSERT INTO cth VALUES('test2','02 March 2003','test_result','FAIL'); INSERT 0 1 db1=# INSERT INTO cth VALUES('test2','02 March 2003','test_startdate','01 March 2003'); INSERT 0 1 db1=# INSERT INTO cth VALUES('test2','02 March 2003','volts','3.1234'); INSERT 0 1 db1=# db1=# SELECT * FROM crosstab db1-# ( db1(# 'SELECT rowid, rowdt, attribute, val FROM cth ORDER BY 1', db1(# 'SELECT DISTINCT attribute FROM cth ORDER BY 1' db1(# ) db1-# AS db1-# ( db1(# rowid text, db1(# rowdt timestamp, db1(# temperature int4, db1(# test_result text, db1(# test_startdate timestamp, db1(# volts float8 db1(# ); rowid | rowdt | temperature | test_result | test_startdate | volts -------+---------------------+-------------+-------------+---------------------+-------- test1 | 2003-03-01 00:00:00 | 42 | PASS | | 2.6987 test2 | 2003-03-02 00:00:00 | 53 | FAIL | 2003-03-01 00:00:00 | 3.1234 (2 rows) db1=# |

新增pg_buffercache和gp_buffercache视图

VMware Greenplum包括一个新的扩展程序 - pg_buffercache -,允许用户访问五个视图以获取集群范围的共享缓冲区指标:gp_buffercache、gp_buffercache_summary、gp_buffercache_usage_counts、gp_buffercache_summary_aggregated和gp_buffercache_usage_counts_aggregated。

该特性在GreenPlum 6.26.2中已提供,不过提供的视图较少。可以参考:https://www.dbaup.com/greenplum-6262banbenxintexingshuoming.html

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 | [gpadmin@gpdb7 ~]$ psql psql (12.12) Type "help" for help. postgres=# select version(); version ---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- PostgreSQL 12.12 (Greenplum Database 7.1.0 build commit:e7c2b1f14bb42a1018ac57d14f4436880e0a0515) on x86_64-pc-linux-gnu, compiled by gcc (GCC) 8.5.0 20210514 (Red Hat 8.5.0-18), 64-bit compiled on Jan 19 2024 06:39:45 Bhuvnesh C. (1 row) postgres=# create database db1; CREATE DATABASE postgres=# \c db1 You are now connected to database "db1" as user "gpadmin". db1=# create extension pg_buffercache; CREATE EXTENSION db1=# select count(*) from gp_buffercache; count ------- 12000 (1 row) db1=# select count(*) from pg_buffercache; count ------- 4000 (1 row) db1=# select * from gp_buffercache limit 6; gp_segment_id | bufferid | relfilenode | reltablespace | reldatabase | relforknumber | relblocknumber | isdirty | usagecount | pinning_backends ---------------+----------+-------------+---------------+-------------+---------------+----------------+---------+------------+------------------ -1 | 1 | 13721 | 1664 | 0 | 0 | 0 | f | 5 | 0 -1 | 2 | 1259 | 1663 | 13720 | 0 | 0 | f | 5 | 0 -1 | 3 | 1259 | 1663 | 13720 | 0 | 1 | f | 5 | 0 -1 | 4 | 1249 | 1663 | 13720 | 0 | 0 | f | 5 | 0 -1 | 5 | 1249 | 1663 | 13720 | 0 | 1 | f | 5 | 0 -1 | 6 | 1249 | 1663 | 13720 | 0 | 2 | f | 5 | 0 (6 rows) db1=# db1=# SELECT n.nspname, c.relname, count(*) AS buffers db1-# FROM pg_buffercache b JOIN pg_class c db1-# ON b.relfilenode = pg_relation_filenode(c.oid) AND db1-# b.reldatabase IN (0, (SELECT oid FROM pg_database db1(# WHERE datname = current_database())) db1-# JOIN pg_namespace n ON n.oid = c.relnamespace db1-# GROUP BY n.nspname, c.relname db1-# ORDER BY 3 DESC db1-# LIMIT 10; nspname | relname | buffers ------------+--------------------------------+--------- pg_catalog | pg_proc | 14 pg_catalog | pg_depend_reference_index | 13 pg_catalog | pg_attribute | 12 pg_catalog | pg_depend | 11 pg_catalog | pg_class | 11 pg_catalog | pg_rewrite | 7 pg_catalog | pg_type | 7 pg_catalog | pg_proc_proname_args_nsp_index | 7 pg_catalog | pg_init_privs | 6 pg_catalog | pg_authid | 5 (10 rows) db1=# select count(*) from gp_buffercache_summary; count ------- 3 (1 row) db1=# select * from gp_buffercache_summary; gp_segment_id | buffers_used | buffers_unused | buffers_dirty | buffers_pinned | usagecount_avg ---------------+--------------+----------------+---------------+----------------+-------------------- -1 | 1562 | 2438 | 120 | 0 | 3.881562099871959 0 | 1489 | 2511 | 117 | 0 | 3.4976494291470788 1 | 1493 | 2507 | 119 | 0 | 3.495646349631614 (3 rows) db1=# select * from gp_buffercache_usage_counts; gp_segment_id | usage_count | buffers | dirty | pinned ---------------+-------------+---------+-------+-------- -1 | 0 | 2438 | 0 | 0 -1 | 1 | 228 | 5 | 0 -1 | 2 | 240 | 8 | 0 -1 | 3 | 49 | 8 | 0 -1 | 4 | 17 | 1 | 0 -1 | 5 | 1028 | 98 | 0 0 | 0 | 2509 | 0 | 0 0 | 1 | 444 | 6 | 0 0 | 2 | 123 | 6 | 0 0 | 3 | 39 | 7 | 0 0 | 4 | 17 | 2 | 0 0 | 5 | 868 | 97 | 0 1 | 0 | 2505 | 0 | 0 1 | 1 | 446 | 6 | 0 1 | 2 | 123 | 6 | 0 1 | 3 | 39 | 7 | 0 1 | 4 | 18 | 2 | 0 1 | 5 | 869 | 100 | 0 (18 rows) db1=# select * from gp_buffercache_summary_aggregated; buffers_used | buffers_unused | buffers_dirty | buffers_pinned | usagecount_avg --------------+----------------+---------------+----------------+------------------- 4550 | 7450 | 359 | 0 | 3.625432361146132 (1 row) db1=# select * from gp_buffercache_usage_counts_aggregated; usage_count | buffers | dirty | pinned -------------+---------+-------+-------- 45 | 12000 | 359 | 0 (1 row) db1=# |

孤儿文件相关

gp_toolkit模式中的gp_check_orphaned_files视图包含一个新列 - filepath -,用于打印孤立文件的相对/绝对路径。

VMware Greenplum 7.1.0在gp_toolkit管理模式中添加了gp_move_orphaned_files用户定义函数(UDF),该函数将gp_check_orphaned_files视图找到的孤立文件移动到您指定的文件系统位置。

1 2 3 4 5 6 | select * from gp_toolkit.gp_check_orphaned_files; select * from gp_toolkit.gp_check_missing_files; select * from gp_toolkit.gp_check_missing_files_ext; SELECT * FROM gp_toolkit.gp_move_orphaned_files('/home/gpadmin/orphaned'); |

分区表相关

gp_toolkit管理模式现在包括一些用于辅助分区维护的对象:一个新视图 - gp_partitions,以及几个新的用户定义函数,包括:pg_partition_rank()、pg_partition_range_from()、pg_partition_range_to()、pg_partition_bound_value()、pg_partition_isdefault()、pg_partition_lowest_child()和pg_partition_highest_child()。有关详细信息,请参阅gp_toolkit管理模式主题。

可以参考:https://docs.vmware.com/en/VMware-Greenplum/7/greenplum-database/ref_guide-gp_toolkit.html

pg_filedump程序

VMware Greenplum引入了一个新实用程序 - pg_filedump -,允许您读取格式化内容的VMware Greenplum数据文件,包括表、索引和控制文件。

The pg_filedump utility formats VMware Greenplum data files -- including table, index and control files -- into a human-readable format.

To use pg_filedump, you must have:

gpsupport1.0.3 or higher installed- a search path that includes the

gpsupportexecutable path

NOTE

pg_filedumpis currently only supported for Greenplum 7 data files.

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 | [gpadmin@gpdb7 18444]$ pg_filedump 9926 ******************************************************************* * PostgreSQL File/Block Formatted Dump Utility * * File: 9926 * Options used: None ******************************************************************* Block 0 ******************************************************** <Header> ----- Block Offset: 0x00000000 Offsets: Lower 64 (0x0040) Block: Size 32768 Version 14 Upper 32752 (0x7ff0) LSN: logid 0 recoff 0x046f5240 Special 32752 (0x7ff0) Items: 10 Free Space: 32688 Checksum: 0x0496 Prune XID: 0x00000000 Flags: 0x0000 () Length (including item array): 64 BTree Meta Data: Magic (0x00053162) Version (4) Root: Block (0) Level (0) FastRoot: Block (0) Level (0) <Special Section> ----- BTree Index Section: Flags: 0x0008 (META) Blocks: Previous (0) Next (0) Level (0) CycleId (0) *** End of File Encountered. Last Block Read: 0 *** [gpadmin@gpdb7 mirror]$ find ./ -name pg_control ./gpseg0/global/pg_control ./gpseg1/global/pg_control [gpadmin@gpdb7 mirror]$ pg_filedump -c ./gpseg0/global/pg_control ******************************************************************* * PostgreSQL File/Block Formatted Dump Utility * * File: ./gpseg0/global/pg_control * Options used: -c ******************************************************************* <pg_control Contents> ********************************************* CRC: Correct pg_control Version: 12010700 Catalog Version: 302307241 System Identifier: 7287791898375007577 State: IN ARCHIVE RECOVERY Last Mod Time: Sun Feb 18 11:11:48 2024 Last Checkpoint Record: Log File (0) Offset (0x0cf18ca8) Last Checkpoint Record Redo: Log File (0) Offset (0x0cf18b50) |- TimeLineID: 1 |- Next XID: 0/2060 |- Next OID: 26549 |- Next Relfilenode: 25699 |- Next Multi: 1 |- Next MultiOff: 0 |- Time: Sun Feb 18 11:11:48 2024 Minimum Recovery Point: Log File (0) Offset (0x0cfa18c0) Backup Start Record: Log File (0) Offset (0x00000000) Backup End Record: Log File (0) Offset (0x00000000) End-of-Backup Record Required: no Maximum Data Alignment: 8 Floating-Point Sample: 1234567 Database Block Size: 32768 Blocks Per Segment: 32768 XLOG Block Size: 32768 XLOG Segment Size: 67108864 Maximum Identifier Length: 64 Maximum Index Keys: 32 TOAST Chunk Size: 8140 |

故障恢复gprecoverseg

当使用输入配置文件(gprecoverseg -i)时,VMware Greenplum现在支持差异段恢复。此外,您现在可以在传递给gprecoverseg -i的recover_config_file中的条目之前添加I、D或F来指示段恢复的类型。在 GreenPlum 6.25.0中也提供了差异化恢复

1 2 3 4 | recoveryType field supports below values: I/i for incremental recovery D/d for differential recovery F/f for full recovery |

EXPLAIN ANALYZE增强

当使用BUFFERS关键字时,EXPLAIN ANALYZE现在显示缓冲区使用情况和I/O时间。

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 | postgres=# \h explain analyze Command: EXPLAIN Description: show the execution plan of a statement Syntax: EXPLAIN [ ( option [, ...] ) ] statement EXPLAIN [ ANALYZE ] [ VERBOSE ] statement where option can be one of: ANALYZE [ boolean ] VERBOSE [ boolean ] COSTS [ boolean ] SETTINGS [ boolean ] BUFFERS [ boolean ] TIMING [ boolean ] SUMMARY [ boolean ] FORMAT { TEXT | XML | JSON | YAML } URL: https://www.postgresql.org/docs/12/sql-explain.html postgres=# EXPLAIN (ANALYZE) select * from pg_tables; QUERY PLAN --------------------------------------------------------------------------------------------------------------------------- Hash Left Join (cost=2.25..19.63 rows=91 width=260) (actual time=0.181..0.407 rows=91 loops=1) Hash Cond: (c.reltablespace = t.oid) Extra Text: Hash chain length 1.0 avg, 1 max, using 2 of 65536 buckets. -> Hash Left Join (cost=1.20..17.15 rows=91 width=140) (actual time=0.114..0.280 rows=91 loops=1) Hash Cond: (c.relnamespace = n.oid) Extra Text: Hash chain length 1.0 avg, 1 max, using 9 of 65536 buckets. -> Seq Scan on pg_class c (cost=0.00..14.80 rows=91 width=80) (actual time=0.043..0.125 rows=91 loops=1) Filter: (relkind = ANY ('{r,p}'::"char"[])) Rows Removed by Filter: 533 -> Hash (cost=1.09..1.09 rows=9 width=68) (actual time=0.009..0.010 rows=9 loops=1) Buckets: 65536 Batches: 1 Memory Usage: 513kB -> Seq Scan on pg_namespace n (cost=0.00..1.09 rows=9 width=68) (actual time=0.004..0.005 rows=9 loops=1) -> Hash (cost=1.02..1.02 rows=2 width=68) (actual time=0.004..0.004 rows=2 loops=1) Buckets: 65536 Batches: 1 Memory Usage: 513kB -> Seq Scan on pg_tablespace t (cost=0.00..1.02 rows=2 width=68) (actual time=0.002..0.003 rows=2 loops=1) Optimizer: Postgres-based planner Planning Time: 0.783 ms (slice0) Executor memory: 1131K bytes. Work_mem: 513K bytes max. Memory used: 128000kB Execution Time: 0.462 ms (20 rows) postgres=# EXPLAIN (ANALYZE, BUFFERS) select * from pg_tables; QUERY PLAN --------------------------------------------------------------------------------------------------------------------------- Hash Left Join (cost=2.25..19.63 rows=91 width=260) (actual time=0.438..0.726 rows=91 loops=1) Hash Cond: (c.reltablespace = t.oid) Extra Text: Hash chain length 1.0 avg, 1 max, using 2 of 65536 buckets. Buffers: shared hit=9 -> Hash Left Join (cost=1.20..17.15 rows=91 width=140) (actual time=0.149..0.341 rows=91 loops=1) Hash Cond: (c.relnamespace = n.oid) Extra Text: Hash chain length 1.0 avg, 1 max, using 9 of 65536 buckets. Buffers: shared hit=8 -> Seq Scan on pg_class c (cost=0.00..14.80 rows=91 width=80) (actual time=0.060..0.140 rows=91 loops=1) Filter: (relkind = ANY ('{r,p}'::"char"[])) Rows Removed by Filter: 533 Buffers: shared hit=7 -> Hash (cost=1.09..1.09 rows=9 width=68) (actual time=0.012..0.013 rows=9 loops=1) Buckets: 65536 Batches: 1 Memory Usage: 513kB Buffers: shared hit=1 -> Seq Scan on pg_namespace n (cost=0.00..1.09 rows=9 width=68) (actual time=0.005..0.006 rows=9 loops=1) Buffers: shared hit=1 -> Hash (cost=1.02..1.02 rows=2 width=68) (actual time=0.006..0.006 rows=2 loops=1) Buckets: 65536 Batches: 1 Memory Usage: 513kB Buffers: shared hit=1 -> Seq Scan on pg_tablespace t (cost=0.00..1.02 rows=2 width=68) (actual time=0.003..0.004 rows=2 loops=1) Buffers: shared hit=1 Optimizer: Postgres-based planner Planning Time: 0.878 ms (slice0) Executor memory: 1131K bytes. Work_mem: 513K bytes max. Memory used: 128000kB Execution Time: 0.811 ms (27 rows) |

gppkg增强

gppkg实用程序选项 -f 现在可帮助删除具有不完整或缺失文件的软件包。

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 | [gpadmin@gpdb7 gppkg]$ gppkg install MetricsCollector-7.0.0_gp_7.0.0-rocky8-x86_64.gppkg Detecting network topology: [==============================================================] [OK] 2 coordinators and 4 segment instances are detected on 1 unique host. Distributing package: [==============================================================] [OK] Decoding package: [==============================================================] [OK] Verifying package installation:[==============================================================] [OK] Verifying package integrity: [==============================================================] [OK] You are going to install the following packages: Install 'MetricsCollector@7.0.0_gp_7.0.0' Continue? [y/N] y Allocating disk space: [================X ] [ERROR] Cleanup: [==============================================================] [OK] Error: from gpdb7: IoError(file '/usr/local/greenplum-db-7.1.0/lib/postgresql/metrics_collector.so' exists in the filesystem Caused by: entity already exists) [gpadmin@gpdb7 gppkg]$ ll /usr/local/greenplum-db-7.1.0/lib/postgresql/metrics_collector.so -rwxr-xr-x 1 gpadmin gpadmin 3570904 Jan 31 14:51 /usr/local/greenplum-db-7.1.0/lib/postgresql/metrics_collector.so [gpadmin@gpdb7 gppkg]$ gppkg install MetricsCollector-7.0.0_gp_7.0.0-rocky8-x86_64.gppkg -f Detecting network topology: [==============================================================] [OK] 2 coordinators and 4 segment instances are detected on 1 unique host. Distributing package: [==============================================================] [OK] Decoding package: [==============================================================] [OK] Verifying package installation:[==============================================================] [OK] Verifying package integrity: [==============================================================] [OK] You are going to install the following packages: Install 'MetricsCollector@7.0.0_gp_7.0.0' Continue? [y/N] y Allocating disk space: [==============================================================] [OK] Install 'MetricsCollector': [==============================================================] [OK] The stdout from the script of the post-install: ] 0.0 - ========================================================================== Metrics Collector installation is complete! ========================================================================== Running post-install hook: [==============================================================] [OK] Result: MetricsCollector has been successfully installed Clean Up: [==============================================================] [OK] |

系统视图gp_stat_progress_dtx_recovery

系统视图gp_stat_progress_dtx_recovery显示了分布式事务(DTX)恢复过程的进度,这可能对监视协调器崩溃后的恢复状态很有用。

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 | [gpadmin@gpdb7 ~]$ ps -ef|grep post | grep bin gpadmin 1204 1 0 10:15 ? 00:00:01 /usr/local/greenplum-db-7.1.0/bin/postgres -D /opt/greenplum/data/primary/gpseg0 -c gp_role=execute gpadmin 1209 1 0 10:15 ? 00:00:01 /usr/local/greenplum-db-7.1.0/bin/postgres -D /opt/greenplum/data/primary/gpseg1 -c gp_role=execute gpadmin 1243 0 0 10:15 ? 00:00:01 /usr/local/greenplum-db-7.1.0/bin/postgres -D /opt/greenplum/data/master/gpseg-1 -c gp_role=dispatch gpadmin 1393 1 0 10:15 ? 00:00:00 /usr/local/greenplum-db-7.1.0/bin/postgres -D /opt/greenplum/data/master_standby/gpseg-1 -c gp_role=dispatch gpadmin 4525 1 0 10:16 ? 00:00:00 /usr/local/greenplum-db-7.1.0/bin/postgres -D /opt/greenplum/data/mirror/gpseg0 -c gp_role=execute gpadmin 4526 1 0 10:16 ? 00:00:00 /usr/local/greenplum-db-7.1.0/bin/postgres -D /opt/greenplum/data/mirror/gpseg1 -c gp_role=execute [gpadmin@gpdb7 ~]$ kill -9 1209 [gpadmin@gpdb7 ~]$ psql psql (12.12) Type "help" for help. postgres=# select * from gp_stat_progress_dtx_recovery; phase | recover_commited_dtx_total | recover_commited_dtx_completed | in_doubt_tx_total | in_doubt_tx_in_progress | in_doubt_tx_aborted -------+----------------------------+--------------------------------+-------------------+-------------------------+--------------------- (0 rows) postgres=# select * from gp_stat_progress_dtx_recovery; phase | recover_commited_dtx_total | recover_commited_dtx_completed | in_doubt_tx_total | in_doubt_tx_in_progress | in_doubt_tx_aborted ------------------------------------------+----------------------------+--------------------------------+-------------------+-------------------------+--------------------- gathering in-doubt orphaned transactions | 0 | 0 | 0 | 0 | 0 (1 row) postgres=# select * from gp_stat_progress_dtx_recovery; phase | recover_commited_dtx_total | recover_commited_dtx_completed | in_doubt_tx_total | in_doubt_tx_in_progress | in_doubt_tx_aborted -------+----------------------------+--------------------------------+-------------------+-------------------------+--------------------- (0 rows) postgres=# select * from gp_segment_configuration ; dbid | content | role | preferred_role | mode | status | port | hostname | address | datadir ------+---------+------+----------------+------+--------+------+----------+---------+-------------------------------------------- 1 | -1 | p | p | n | u | 5432 | gpdb7 | gpdb7 | /opt/greenplum/data/master/gpseg-1 3 | 1 | m | p | n | d | 6001 | gpdb7 | gpdb7 | /opt/greenplum/data/primary/gpseg1 5 | 1 | p | m | n | u | 7001 | gpdb7 | gpdb7 | /opt/greenplum/data/mirror/gpseg1 6 | -1 | m | m | s | u | 5433 | gpdb7 | gpdb7 | /opt/greenplum/data/master_standby/gpseg-1 2 | 0 | p | p | s | u | 6000 | gpdb7 | gpdb7 | /opt/greenplum/data/primary/gpseg0 4 | 0 | m | m | s | u | 7000 | gpdb7 | gpdb7 | /opt/greenplum/data/mirror/gpseg0 (6 rows) postgres=# |

log_directory配置日志位置

您现在可以使用服务器配置参数log_directory手动配置VMware Greenplum日志的位置。gpsupport实用程序还支持从由此服务器配置参数设置的目录中收集日志。

1 2 3 4 5 6 7 8 9 10 11 12 13 14 | -- GPDB 7.1.0 ,日志默认位于log目录,/opt/greenplum/data/master/gpseg-1/log/ [gpadmin@gpdb7 ~]$ gpconfig -s log_directory Values on all segments are consistent GUC : log_directory Coordinator value: log Segment value: log -- GPDB 6.26,日志默认位于pg_log目录 [gpadmin@gpdb6261 ~]$ gpconfig -s log_directory Values on all segments are consistent GUC : log_directory Master value: pg_log Segment value: pg_log [gpadmin@gpdb6261 ~]$ |

新增optimizer_enable_right_outer_join服务器配置参数

新的optimizer_enable_right_outer_join服务器配置参数允许您控制GPORCA是否生成右外连接。在观察到与右外连接相关的性能不佳的情况下,您可以选择禁止使用它们。 该特性在GreenPlum 6.26.2中已提供。可以参考:https://www.dbaup.com/greenplum-6262banbenxintexingshuoming.html

1 2 3 4 5 6 | [gpadmin@gpdb7 ~]$ gpconfig -s optimizer_enable_right_outer_join Values on all segments are consistent GUC : optimizer_enable_right_outer_join Coordinator value: on Segment value: on [gpadmin@gpdb7 ~]$ |

VACUUM命令现在包含了SKIP_DATABASE_STATS和ONLY_DATABASE_STATS子句

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 | postgres=# \h vacuum Command: VACUUM Description: garbage-collect and optionally analyze a database Syntax: VACUUM [ ( option [, ...] ) ] [ table_and_columns [, ...] ] VACUUM [ FULL ] [ FREEZE ] [ VERBOSE ] [ AO_AUX_ONLY ] [ ANALYZE ] [ table_and_columns [, ...] ] where option can be one of: FULL [ boolean ] FREEZE [ boolean ] VERBOSE [ boolean ] AO_AUX_ONLY [ boolean ] ANALYZE [ boolean ] DISABLE_PAGE_SKIPPING [ boolean ] SKIP_LOCKED [ boolean ] INDEX_CLEANUP [ boolean ] TRUNCATE [ boolean ] SKIP_DATABASE_STATS [ boolean ] ONLY_DATABASE_STATS [ boolean ] and table_and_columns is: table_name [ ( column_name [, ...] ) ] URL: https://www.postgresql.org/docs/12/sql-vacuum.html |